Here are some things that consultants do that annoy me.

Some consultants brag about who is backing their company or whom they claim as their customers. I’ve never believed that rich people are any smarter than poor people, so I’m not impressed by consultants who brag about who is backing them or who founded their company. Recent Ponzi scheme and hedge fund implosions confirm my thinking. And it seems like the really smart people who invented technology 1.0 and made a billion are not reliably repeating their success with technology 2.0. It happens, but not predictably, so mentioning that [insert famous web 1.0 person here] founded or is backing your company is a waste of a slide IMHO.

I’m also not impressed by consultants who list [insert Fortune 500 here] as their clients. Perhaps [insert Fortune 500 here] has a world-class IT operation and the consultant was instrumental in making it world class. Perhaps not. I have no way of knowing. It’s possible that some tiny corner of [insert Fortune 500 here] hired them to do [insert tiny project here] and they screwed it up, but that’s all they needed to brag that [insert Fortune 500 here] is their customer and add another logo to their PowerPoint.

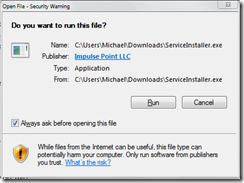

I’m really unimpressed when consultants tell me that they are the only ones competent enough to solve my problems, or that I’m not competent enough to solve my own problems. One consulting house tried that on me years ago, claiming that firewalling fifty campuses was beyond the capability of ordinary mortals and that if we did it ourselves, we’d botch it. That got them a lifetime ban from my address book. They didn’t know that we had already ACL’d fifty campuses, that inserting a firewall in line with a router was a trivial network problem, that converting the router ACLs to firewall rules was scriptable, and that I had already written the script.

I’ve also had consultants ‘accidentally’ show me ‘secret’ topologies for the security perimeters of [insert Fortune 500 here] on their conference room whiteboard. Either they are incompetent for disclosing customer information to a third party, or they drew up a bogus whiteboard to try to impress me. Either way, I’m not impressed. Another lifetime ban.

Another annoyance is consultants who try to implement technologies, projects, or processes that the organization can’t support or maintain. I’ve seen people come in and try to implement processes or technologies that, although they might be what the book says or what everyone else is doing, aren’t going to fit the organization for whatever reason. If the organization can’t manage the project, application, or technology after the consultant leaves, a perceptive consultant will steer the client toward a solution that is manageable and maintainable. In some cases, the consultant acquired the necessary perception only after significant effort on my part with the verbal equivalent of a blunt object.

A SaaS vendor recently annoyed me pretty badly by insisting on pointing out how well their whole suite of products integrates, even after I had repeatedly and clearly told them I was only interested in one small product, and that they were on site to tell me about that product and nothing else. “I want to integrate your CMDB with MY existing management infrastructure, not YOUR whole suite. Next slide, please. <dammit!>” Then it went downhill. I asked them what protocols they use to integrate their product with the other products in their suite. The reply: a VPN. Technically, they weren’t consultants, though. They were pre-sales.

That’s not to say that I’m anti-consultant. I’ve seen many very competent consultants do an excellent job. At times I’ve been extremely impressed.

Obviously I’ve also been disappointed.