A simple analysis of system availability can be broken down into two ideas. First, how long will I go, on average, before I have an unexpected system outage or unavailability (MTBF)? Second, when I have an outage, how long will it take to restore service (MTTR)?
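
These two ideas combine in the standard steady-state formula, availability = MTBF / (MTBF + MTTR). Here is a minimal sketch of that arithmetic; the function names and the sample numbers are mine, chosen only for illustration.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: the fraction of time the system is up."""
    if mtbf_hours <= 0 or mttr_hours < 0:
        raise ValueError("MTBF must be positive and MTTR non-negative")
    return mtbf_hours / (mtbf_hours + mttr_hours)


def downtime_hours_per_year(mtbf_hours: float, mttr_hours: float) -> float:
    """Expected unavailable hours in a 365-day year."""
    return (1.0 - availability(mtbf_hours, mttr_hours)) * 365 * 24


# Hypothetical example: one failure every 2,000 hours, 4 hours to restore.
print(f"{availability(2000, 4):.4%}")
print(f"{downtime_hours_per_year(2000, 4):.1f} hours/year")
```

The formula shows why the rest of this post has two halves: you can raise availability either by making MTBF longer or by making MTTR shorter.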

Any discussion of availability, MTBF and MTTR can quickly descend into endless talk about exact measurements of availability and response time. That sort of discussion is appropriate when you have availability clauses in contractual obligations or SLAs. What I'll try to do is frame this as a guide to maintaining system availability for an audience that isn't spending consulting dollars, and that is interested in available systems, not SLA contract language.

Less failure means longer MTBF

What can I do to decrease the likelihood of unexpected system down time? Here's my list, in the approximate order that I believe they affect availability.

Structured System Management. You gain availability if you manage your systems with some sort of structured process or framework. As a broad concept, this means that you have the fundamentals of building, securing, deploying, monitoring, alerting, and documenting networks, servers and applications in place, you execute them consistently, and you know all cases where you are inconsistent. (Compare this with what I call ad hoc management, where every system has its own plan, undocumented and inconsistent, and you don't know how inconsistent they are, because you've never looked.) This structure doesn't need to be a full ITIL framework that a million dollars' worth of consultants dropped in your lap, but it has to exist, even if only in simple, straightforward wiki or paper-based systems.

Stable Power, UPS, Generator. You need stable power. Your systems need dual power supplies, or the systems themselves need to be redundant. The redundant power supplies need to be on separate circuits, you need good quality UPSs with fresh batteries, and depending on your availability requirements you may need a generator. In some places, even in large US metro areas, we've had power failures several times per year, and in cases where we had no generator, we had system outages as soon as the UPSs ran out.

Good Hardware. I'm a fan of tier one hardware. I believe that tier one vendors add value to the process of engineering servers, storage and network hardware. That means paying the price, but that also means that you generally get systems that are intentionally engineered for performance and availability, rather than randomly engineered one component at a time. A tier one vendor with a 3 year warranty has a financial incentive to build hardware that doesn't fail.

Buying into tier one hardware also means that in the case of servers, you get the manufacturer's system management software for monitoring and alerting, you generally get some form of predictive failure alerting, and you get tested software and component compatibility.

Tier one hardware has worked very well for us, with one exception. We have a clustered pair of expensive 'enterprise' class servers that have had more open hardware tickets on them than any 30 of our other vendors' servers.

Good logging, monitoring and management tools. Modern hardware and software components try really hard to tell you that they are going to fail, sometimes even a long time before they fail. ECC memory will keep trying to correct bit errors, hard disks will try to correct read/write errors, and all those attempts get logged somewhere. Operating systems and applications tend to log interesting things when they think they have problems. Detecting the interesting events and escalating them to e-mail, SMS or pagers gives you a fair shot at resolving a problem before it causes an outage. I'd much rather get paged by a server that thinks it is going to die than by one that is already dead.
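
Detecting and escalating those events can start out very simply. The sketch below scans log lines for early-warning messages and hands each match to a notifier. The patterns and the sample log lines are hypothetical; real messages vary by vendor, operating system and driver, and in practice the notifier would send e-mail, SMS or a page rather than print.

```python
import re

# Hypothetical patterns; real messages vary by vendor, OS and driver.
WARNING_PATTERNS = [
    re.compile(r"corrected (memory|ECC) error", re.IGNORECASE),
    re.compile(r"predictive failure", re.IGNORECASE),
    re.compile(r"read error|write error", re.IGNORECASE),
]


def find_warnings(log_lines):
    """Return the log lines that match any early-warning pattern."""
    return [
        line.rstrip("\n")
        for line in log_lines
        if any(p.search(line) for p in WARNING_PATTERNS)
    ]


def escalate(warnings, notify):
    """Pass each warning to a caller-supplied notifier; return the count."""
    for warning in warnings:
        notify(warning)
    return len(warnings)


# Made-up sample lines, for illustration only.
sample = [
    "kernel: EDAC MC0: 1 CE corrected memory error on DIMM_A1",
    "sshd: session opened for user admin",
    "raid: drive 3 predictive failure reported",
]
escalate(find_warnings(sample), notify=print)
```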

Reasonable Security. A security event is an unavailability event. The Slammer Worm was an availability problem, not a security problem. A security event that results in lost or stolen data will also be an availability event.

Systematic Test and QA. You have the ability to test patches, upgrades and changes, right? Any offline test environment is better than no test environment, even if the test environment isn't exactly identical to production. But as your availability requirements go up, your test and QA become far more important, and at higher availability requirements, test and QA that matches production as closely as possible is critical.

Simple Change Management. This can be really simple, but it is essential. A notice to affected parties, even if the change is supposed to be non-disruptive, a checklist of exactly what you are going to do, and a checklist of exactly how you are going to undo what you just did are the first steps. You need to know who changed what and when and why they changed it. If you have no change process, a simple system will improve availability. If you already have a system, making it more complex might or might not improve availability.
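
To show how little is needed, here is a sketch of a change record that captures who, what, when and why, plus the notice and the two checklists. The class, its field names and the sample change are all hypothetical; a wiki page or paper form with the same fields would do the same job.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class ChangeRecord:
    """One planned change: who, what, when, why, and how to undo it."""
    who: str
    what: str
    why: str
    when: datetime
    notified: list = field(default_factory=list)        # affected parties
    steps: list = field(default_factory=list)           # what you will do
    rollback_steps: list = field(default_factory=list)  # how you will undo it

    def missing(self):
        """Return the names of the required parts that are still empty."""
        required = {
            "notified": self.notified,
            "steps": self.steps,
            "rollback_steps": self.rollback_steps,
        }
        return [name for name, value in required.items() if not value]


# Hypothetical change with no rollback checklist written yet.
change = ChangeRecord(
    who="jdoe",
    what="Upgrade firmware on switch-07",
    why="Vendor fix for port flapping",
    when=datetime(2008, 7, 19, 22, 0),
    notified=["app-team"],
    steps=["Save running config", "Load firmware", "Reboot switch"],
)
print(change.missing())
```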

Neat cabling and racks. You cannot reliably touch a rack that is a mess. You'll break something that wasn't supposed to be down, you'll disconnect the wrong wire, and you'll generally wreak havoc on your production systems.

Installation Testing. You know that server has functional network multipathing and redundant SAN connections because you tested them when you installed them, and you made sure that when you forced a path failure during your test you got alerted by your monitoring system, right?

Reducing MTTR

Once the system is down, the clock starts ticking. Obviously your system is unavailable until you resolve the problem, so any time shaved off the problem resolution part of the equation increases availability.

Structured Systems Management and Change Management. You know where your docs are, right? And you can access them from home, in the middle of the night, right? You also know what last changed and who changed it, right? You know what server is on what jack on what switch, right? Finding that out after you are down adds to your MTTR.

Clustering, active/passive fail over. In many cases, the fastest MTTR is a simple active/passive fail over. Pairs of redundant firewalls, load balanced app servers, and clustered database and file servers do not improve your MTBF. They may, in fact, because of the complexity factor, increase your likelihood of failure. But when properly configured, they greatly decrease the time it takes to resolve the failure. A failed component on a non-redundant server is probably an 8 hour outage, when you consider the time to detect the problem, alert on-call staff in the middle of the night, call a vendor, wait for parts and installation, and restart the server. That would likely be a 3 minute cluster fail over on a simple active/passive fail over pair.
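
To put numbers on the 8 hour versus 3 minute comparison, here is a rough sketch. It assumes exactly one component failure per year in both cases, which is my assumption for illustration and not a measured failure rate.

```python
HOURS_PER_YEAR = 365 * 24


def annual_availability(failures_per_year: float, hours_per_failure: float) -> float:
    """Availability over one year given a failure count and time to restore."""
    downtime = failures_per_year * hours_per_failure
    return 1.0 - downtime / HOURS_PER_YEAR


# Assumption for illustration: one component failure per year in both cases.
standalone = annual_availability(1, 8.0)    # 8 hour repair
clustered = annual_availability(1, 3 / 60)  # 3 minute fail over

print(f"standalone: {standalone:.4%}")
print(f"clustered:  {clustered:.4%}")
```

Under that assumption the non-redundant server comes in at roughly 99.91% for the year, while the clustered pair stays above 99.999%, even though both had the same number of failures.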

Fail over doesn't always work though. We have had situations where the problem was ambiguous: service was affected, but the clustering heartbeat was not, so the inactive device or server didn't come on line. In that case, the MTTR is the time that it takes for your system manager to figure out that the cluster isn't going to fail over on its own, remote console in to the lights-out board on the misbehaving server, and disable it. The passive node then has an unambiguous decision to make, and odds are it will make the decision to become active.
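
The ambiguous case is easier to see when the two health signals are written out separately. This is a deliberately simplified model of a heartbeat-only cluster, with hypothetical function names; real cluster software has more signals and more states.

```python
def passive_should_take_over(heartbeat_ok: bool) -> bool:
    """A heartbeat-only cluster promotes the passive node only on heartbeat loss."""
    return not heartbeat_ok


def operator_action(heartbeat_ok: bool, service_ok: bool) -> str:
    """What is needed to restore service for a given pair of health signals."""
    if service_ok:
        return "none"
    if passive_should_take_over(heartbeat_ok):
        return "automatic fail over"
    # Service is down but the heartbeat still looks healthy: the ambiguous case.
    return "manually disable active node"


print(operator_action(heartbeat_ok=False, service_ok=False))
print(operator_action(heartbeat_ok=True, service_ok=False))
```

Disabling the misbehaving node by hand turns the second case into the first: the heartbeat stops, and the passive node no longer has anything ambiguous to decide.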

Monitoring and logging. Your predictive failure messages, syslog messages, netflow logs, firewall logs, event logs and perfmon data will all be essential to quick problem resolution. If you don't have logging and alerting on simple parameters like high CPU, disks full, memory ECC error counts and process failures, or if you can't readily access that information, your response time will be increased by the amount of time it takes to gather that simple data.
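
Alerting on simple parameters does not need to be elaborate. The sketch below compares collected metrics against thresholds and also flags any metric that was not collected at all, since missing data is what slows you down during an outage. The metric names and threshold values are hypothetical; tune them to your own systems.

```python
# Hypothetical thresholds; tune them to your own systems.
THRESHOLDS = {
    "cpu_percent": 90.0,
    "disk_used_percent": 85.0,
    "ecc_corrected_errors": 0,
}


def check_metrics(metrics: dict) -> list:
    """Return an alert string for each metric that is missing or over its limit."""
    alerts = []
    for name, limit in THRESHOLDS.items():
        value = metrics.get(name)
        if value is None:
            alerts.append(f"{name}: no data collected")
        elif value > limit:
            alerts.append(f"{name}: {value} exceeds {limit}")
    return alerts


# Sample reading with high CPU and no ECC counter collected.
print(check_metrics({"cpu_percent": 97.5, "disk_used_percent": 40.0}))
```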

Paging, on-call. Your people need to know when your stuff fails, and if they are on call, they need a good set of tools at home, ready to use. Your MTTR clock starts when the system fails. You only start resolving the problem when your people are connected to their toolkit, ready to start troubleshooting.

Remote Consoles and KVMs. You really need remote access to your servers, even if you work in a cube right next to the data center. You don't live next to the server, right? And you probably only work 12 hours a day, so for the other 12 hours a day you need remote access.

Service Contracts and Spares. Your ability to quickly resolve an outage will likely depend on how soon you can bring your vendor's tech support on line. That means having up-to-date support contracts, with phone numbers and escalation processes documented and readily available. It also means that you need to have appropriate contracts in place at levels of support that match your availability requirements. Your vendors need to be on the hook at least as badly as you are, and you need to have worked out the process for escalating to them before you have a failure.

Tested Recovery. You need to know, because you have tested and documented it, how you are going to recover a failed server or device. You cannot figure it out on the fly. Your MTTR window isn’t long enough.

Related Posts:

Estimating Availability of Simple Systems – Introduction

Estimating Availability of Simple Systems - Non-redundant

Estimating Availability of Simple Systems - Redundant

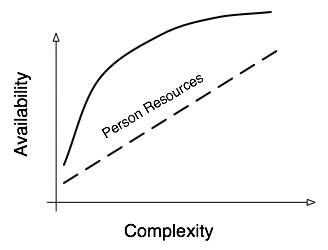

Availability, Complexity and the Person-Factor

(2008-07-19 -Updated links, minor edits)