Some notes on scaling a large online learning application.

09/29/2008 - Updated to correct minor grammatical errors.

Stats:

- 29 million hits per day, 700/second

- 14 million .NET active content pages per day[1]

- 900 transactions per second

- 2000 database queries per second

- 20 million user created content files

- Daily user population of over 100,000

- Database server with 16 dual core x64 CPUs, 128GB RAM, clustered

- Nine IIS application servers, load balanced

- The largest installation of the vendor's product

Breadth and complexity. The application is similar to a comprehensive ERP application, with a couple thousand stored procedures and thousands of unique pages of active web content covering a full suite of online learning applications, including content creation and delivery, discussions, quizzing, etc. The application has both breadth and depth, and is approximately as complex as a typical ERP application. This makes tuning interesting. If a quarter million dollar query pops up, it can be tuned or redesigned, but if the load is spread more or less evenly across dozens or hundreds of queries and stored procedures, the opportunities for quick wins are few.

Design. Early design decisions by the vendor have been both blessings and curses. The application is not designed for horizontal scalability at the database tier. Many normal scaling options are therefore unavailable. Database scalability is currently limited to adding cores and memory to the server, and adding cores and memory doesn’t scale real well.

The user session state is stored in the database. The original version of the application made as many as ten database round trips per web page, shifting significant load back to the database. Later versions cached a significant fraction of the session state, reducing database load. The current version has stateless application servers that also cache session state, so database load is reduced by caching, but load balancing decisions can still be made without worrying about user session stickiness. (Best of both worlds. Very cool.)
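As a rough illustration of that last arrangement, here is a minimal read-through cache sketch in Python. It is not the vendor's implementation: the `SessionCache` class, the time-to-live expiry, and the dictionary standing in for the database are all assumptions made for the example. The point is only that each app server can answer repeat requests from its own cache while any server can still pick up any session from the database.

```python
import time
from typing import Callable, Dict, Tuple


class SessionCache:
    """Hypothetical per-app-server cache in front of database-held session state."""

    def __init__(self, load_from_db: Callable[[str], dict],
                 ttl_seconds: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self._load = load_from_db          # assumed loader; one call = one DB round trip
        self._ttl = ttl_seconds            # assumed expiry policy, not from the source
        self._clock = clock
        self._entries: Dict[str, Tuple[float, dict]] = {}
        self.db_round_trips = 0

    def get(self, session_id: str) -> dict:
        now = self._clock()
        entry = self._entries.get(session_id)
        if entry is not None and entry[0] > now:
            return entry[1]                # cache hit: no database work
        # Cache miss or expired entry: go back to the database and remember the result.
        self.db_round_trips += 1
        state = self._load(session_id)
        self._entries[session_id] = (now + self._ttl, state)
        return state


# The "database" here is just a dict; two caches model two app servers.
database = {"session-1": {"user": "alice", "course": "BIO-101"}}
server_a = SessionCache(lambda sid: database[sid])
server_b = SessionCache(lambda sid: database[sid])

for _ in range(10):                        # ten page views routed to server A
    server_a.get("session-1")
state_on_b = server_b.get("session-1")     # the load balancer may pick B next
```

Ten page views on one server cost a single database round trip, and a request landing on the other server still works because the database remains the source of truth. A real cache would also need some way to invalidate or refresh entries when session state changes.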

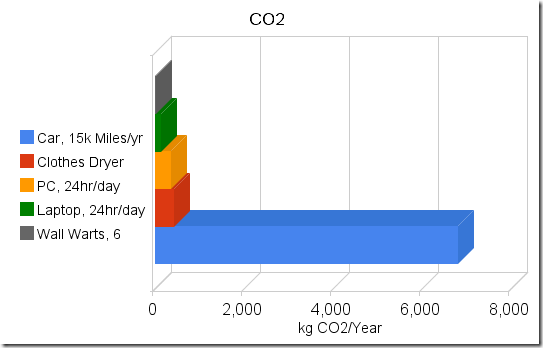

Load Curve.

The load curve peaks early in the semester after long periods of low load between semesters. Semester start has a very steep ramp up, with first day load as much as 10 times the load the day before (See chart). This reduces opportunity for tuning under moderate load. The app must be tuned under low load. Assumptions and extrapolations are used to predict performance at semester startup. There is no margin for error. The app goes from idle to peak load in about 3 hours on the morning of the first day of classes. Growth tends to be 30-50% per semester, so peak load is roughly predicted at 30-50% above last semester peak.
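The extrapolation described above is simple arithmetic, sketched here in Python. The function names are made up for the example, and using 700 hits per second as last semester's peak is only an assumption for illustration; the stats do not say which semester that figure belongs to.

```python
def projected_peak_range(last_peak, growth_pct_low=30, growth_pct_high=50):
    """Return (low, high) projected peak load given 30-50% semester growth."""
    low = last_peak * (100 + growth_pct_low) / 100
    high = last_peak * (100 + growth_pct_high) / 100
    return low, high


def has_headroom(tested_capacity, last_peak, growth_pct_high=50):
    """True if capacity measured under low load covers the high-end projection."""
    _, high = projected_peak_range(last_peak, growth_pct_high=growth_pct_high)
    return tested_capacity >= high


# Illustrative numbers only: 700/second is assumed to be last semester's peak.
low, high = projected_peak_range(700)
```

With those assumed inputs the projection works out to a range of 910 to 1050 hits per second, and a system believed to handle 1000 per second would fall short at the high end. Since there is no moderate-load period to check the estimate against, it makes sense to plan for the top of the range.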

Early Problems

Unanticipated growth. We did not anticipate the number of courses offered by faculty the first semester. Hardware mitigated some of the problem. The database server grew from 4CPU/4GB RAM to 4CPU/24GB, then 8CPU/32GB in 7 weeks. App servers went from four to six to nine.

Database fundamentals: I/O, Memory, and problems like ‘don’t let the database engine use so much memory that the OS gets swapped to disk’ were not addressed early enough.

Poor monitoring tools. If you can’t see deep into the application, operating system and database, you can’t solve problems.

Poor management decisions. Among other things, the project was not allowed to draw on existing DBA resources, so non-DBA staff were forced up a very steep database learning curve. ("Better take the book home tonight, 'cause tomorrow you're gonna be the DBA.") Additionally, options for restricting growth by slowing the adoption rate of the new platform were declined, and critical hosting decisions were deferred or not made at all.

Unrealistic Budgeting. The initial budget was also very constrained. The vendor said 'You can get the hardware for this project for N dollars'. Unfortunately N had one too few zeros on the end of it. Upper management compared the vendor's N with our estimate of N * 10. We ended up compromising at N * 3 dollars, had that hardware last only a month, and within a year and a half spent N * 10 anyway.

Application bugs. We didn’t expect tempDB to grow to 5 times the size of the production database and we didn’t expect tempDB to be busier than the production database. We know from experience that SQL Server 2000 can handle 200 database logins/logouts per second. But just because it can, doesn’t mean it should. (The application broke its connection pooling.)
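For readers who have not been bitten by this, here is a minimal sketch of what connection pooling buys you, written in Python with SQLite standing in for the real database. The `ConnectionPool` class and its `logins` counter are hypothetical and have nothing to do with how the .NET pool or the application actually worked; the sketch only shows the difference between one login reused many times and one login per request.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """Hypothetical pool: hand out idle connections instead of logging in again."""

    def __init__(self, connect, size):
        self._connect = connect                    # factory that performs a "login"
        self._idle = queue.LifoQueue(maxsize=size)
        self.logins = 0

    @contextmanager
    def connection(self):
        try:
            conn = self._idle.get_nowait()         # reuse an idle connection if any
        except queue.Empty:
            conn = self._connect()                 # otherwise pay for a new login
            self.logins += 1
        try:
            yield conn
        finally:
            try:
                self._idle.put_nowait(conn)        # return it for the next request
            except queue.Full:
                conn.close()                       # pool is full: really log out


def run_requests(pool, count):
    for _ in range(count):
        with pool.connection() as conn:
            conn.execute("SELECT 1").fetchone()


pooled = ConnectionPool(lambda: sqlite3.connect(":memory:"), size=4)
run_requests(pooled, 200)

# Broken pooling, modeled as a login and logout for every single request.
unpooled_logins = 0
for _ in range(200):
    conn = sqlite3.connect(":memory:")
    unpooled_logins += 1
    conn.execute("SELECT 1").fetchone()
    conn.close()
```

In this single-threaded sketch the pooled path logs in once for 200 requests, while the unpooled path logs in 200 times.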

32 bits. We really were way beyond what could rationally be done with 32 bit operating systems and databases, but the application vendor would not support Itanium at all, and SQL 2005 in 64 bit mode wasn’t supported until recently. The app still does not support 64 bit .NET application servers.

Query Tuning and uneven index/key distribution. We had parts of the database where the cardinality looked like a classic long tail problem, making query tuning and optimization difficult. We often had to choose between optimizing for one end of the key distribution or the other, with performance problems at whichever end we didn't optimize.

Application Vendor Denial. It took a long time and lots of data to convince the app vendor that not all of the problems were caused by the customer. Lots of e-mail, sometimes rather rude, was exchanged. As time went on, they started to accept our analysis of problems, and as of today, they are very good to work with.

Redundancy. We saved money by not making the original file and database server clustered. That cost us in availability.

Later Problems

Moore's Law. Our requirements have tended to be ahead of where the hardware vendors were with easily implementable x86 servers. Moore's Law couldn’t quite keep up with our growth rate. Scaling x86 SQL Server past 8 CPUs in 2004 was hard. In 2005 there were not very many options for 16 processor x86 servers. Scaling to 32 cores in 2006 was not any easier. Scaling to 32 cores on a 32 bit x86 operating system was beyond painful. IBM’s x460 (x3950) was one of the few choices available, and it was a painfully immature hardware platform at the time that we bought it.

The “It Works” Effect. User load tended to ramp up quickly after a smooth, trouble free semester. The semester after a smooth semester tended to expose new problems as load increased. Faculty apparently wanted to use the application but were held back by real or perceived performance problems. When the problems went away for a while they jumped on board, and the next semester hit new scalability limits.

Poor App Design. We had a significant number of high volume queries that required re-parsing and re-optimization on each invocation. Several of the most frequently called queries were not parameterizable and hence had to be parsed each time they were called. At times we were parsing hundreds of new queries per second, using valuable CPU resources on parsing and optimizing queries that would likely never get called again. We spent person-months digging into query optimization and building a toolkit to help dissect the problem.
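The difference between a parameterized query and one with values baked into the text can be sketched in a few lines of Python. SQLite stands in for SQL Server here, the `enrollment` table is invented for the example, and the count of distinct statement texts is only a proxy for how many statements a database engine would have to parse and optimize. SQL Server's actual plan cache behavior is not modeled.

```python
import sqlite3


def distinct_statement_texts(user_ids, parameterized):
    """Run one lookup per user and count how many different SQL texts were sent."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE enrollment (user_id INTEGER, course TEXT)")
    conn.executemany(
        "INSERT INTO enrollment VALUES (?, ?)",
        [(uid, "course-%d" % uid) for uid in user_ids],
    )
    seen = set()
    for uid in user_ids:
        if parameterized:
            # Same text every time; only the bound value changes.
            sql = "SELECT course FROM enrollment WHERE user_id = ?"
            rows = conn.execute(sql, (uid,)).fetchall()
        else:
            # Value embedded in the text: every call looks like a brand new query.
            sql = "SELECT course FROM enrollment WHERE user_id = %d" % uid
            rows = conn.execute(sql).fetchall()
        assert rows == [("course-%d" % uid,)]
        seen.add(sql)
    conn.close()
    return len(seen)


literal_count = distinct_statement_texts(range(100), parameterized=False)
bound_count = distinct_statement_texts(range(100), parameterized=True)
```

A hundred lookups with embedded literals produce a hundred distinct statements, while the parameterized form produces one. Multiply that by hundreds of calls per second and the parsing cost described above starts to look plausible.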

Database bugs. Page latches killed us. Tier 3 database vendor support, complete with a 13 hour phone call and gigs of data, finally resolved a years-old (but rarely occurring) latch wait state problem, and also uncovered a database engine bug that only showed up under a particularly odd set of circumstances (ours, of course). And did you know that when 32-bit SQL Server sees more than 64GB of RAM it rolls over and dies? We didn't. Neither did Microsoft. We eventually figured it out after about 6 hours on the phone with IBM Advanced Support, MS operating system tier 3 and MSSQL database tier 3 all scratching their heads. /BURNMEM to the rescue.

High End Hardware Headaches. We ended up moving from a 4-way HP DL580 to an 8-way HP DL740 to 16-way IBM x460s (and then to 32 core x3950s). The x460s and x3950s ended up being a maintenance headache beyond anything that I could have imagined. We hit motherboard firmware bugs, disk controller bugs, bogus CPU overtemp alarms, and hardware problems (bad voltage regulators on chassis interface boards), and even ended up with an IBM 'Top Gun' on site. (That's her title. And no, there is no contact info on her business card. Just 'Top Gun'.)

File system management. Maintaining file systems with tens of millions of files is a pain, no matter how you slice it.

Things that went right.

We bought good load balancers right away. The Netscalers have performed nearly flawlessly for 4 years, dishing out a thousand pages per second of proxied, content switched, SSL’d and Gzip’d content.

The application server layer scales out horizontally quite easily. The combination of proxied load balancing, content switching and stateless application servers allows tremendous flexibility at the app server layer.

We eventually built a very detailed database statistics and reporting engine, similar to Oracle AWR reports. We know, for example, what the top N queries are for CPU, logical I/O, physical I/O, etc., at ten minute intervals any time during the last 90 days.
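The core of a report like that is a bucket-and-rank step, sketched below in Python. This is not our reporting engine: the sample layout (`ts`, `query`, `cpu_ms`, `logical_reads`) and the function names are assumptions for illustration, and a real collector would pull its samples from the database's own statistics rather than a hand-written list.

```python
import heapq
from collections import defaultdict
from datetime import datetime


def bucket_start(ts):
    """Floor a timestamp to the start of its ten minute interval."""
    return ts.replace(minute=ts.minute - ts.minute % 10, second=0, microsecond=0)


def top_n(samples, metric, n=3):
    """Sum a metric per query within each interval, then keep the n largest."""
    totals = defaultdict(lambda: defaultdict(int))
    for sample in samples:
        totals[bucket_start(sample["ts"])][sample["query"]] += sample[metric]
    return {
        bucket: heapq.nlargest(n, per_query.items(), key=lambda item: item[1])
        for bucket, per_query in totals.items()
    }


# Hypothetical samples; query names and numbers are invented.
samples = [
    {"ts": datetime(2008, 9, 2, 9, 1), "query": "q_a", "cpu_ms": 50, "logical_reads": 900},
    {"ts": datetime(2008, 9, 2, 9, 7), "query": "q_b", "cpu_ms": 120, "logical_reads": 40},
    {"ts": datetime(2008, 9, 2, 9, 9), "query": "q_a", "cpu_ms": 100, "logical_reads": 300},
    {"ts": datetime(2008, 9, 2, 9, 12), "query": "q_c", "cpu_ms": 10, "logical_reads": 5},
]
report = top_n(samples, "cpu_ms", n=1)
```

Calling `top_n` again with `"logical_reads"` ranks the same samples by a different metric, which is useful because the worst query for CPU is often not the worst one for I/O.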

HP Openview Storage Mirroring (Doubletake) works pretty well. It's keeping 20 million files in sync across a WAN with not too many headaches.

We had a few people who dedicated person-years of their life to the project, literally sleeping next to their laptops, going for years without ever being more than arm's reach from the project. And they don’t get stock options.

I ended up with a couple quotable phrases to my credit.

On Windows 2003 and SQL Server:

"It doesn't suck as bad as I thought it would"

and

"It's displayed an unexpected level of robustness"

Lessons:

Details matter. Enough said.

Horizontal beats vertical. We know that. So does everyone else in the world. Except perhaps our application vendor. The application is still not horizontally scalable at the database tier and the database vendor still doesn't provide a RAC-like horizontally scalable option. Shards are not an option. That will limit future scalability.

Monitoring matters. Knowing what to monitor and when to monitor it is essential to both proactive and reactive application and database support.

AWR-like reports matter. We have consistently decreased the per-unit load on the back end database by continuously pounding down the top 'N' queries and stored procedures. The application vendor gets a steady supply of data from us. They roll tweaks and fixes from their customers into their normal maintenance release cycle. It took a few years, but they really do look at their customers' performance data and develop fixes. We fed the vendor data all summer. They responded with maintenance releases, hot fixes and patches that reduced database load by at least 30%. Other vendors take note. Please.

Vendor support matters. We had an application that had 100,000 users, and we were using per-incident support for the database and operating system rather than premier support. That didn't work. But it did let us make a somewhat amusing joke at the expense of some poor first tier help desk person.

Don’t be the largest installation. You’ll be the load test site.

Related Posts:

- The Quarter Million Dollar Query

- Unlimited Resources

- Naked Without Strip Charts

[1] For our purposes, a page is a URL with active content that connects to the database and has at least some business logic.